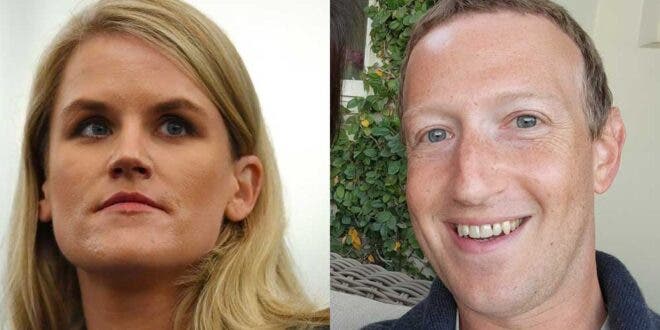

Scott speaks with Karen Hao, the senior artificial intelligence editor for MIT Technology Review, whose groundbreaking work looking into Facebook was validated in a massive way this week by whistleblower Frances Haugen’s testimony to the U.S. Senate. Hao holds a mechanical engineering degree from MIT, which she took to outlets like Mother Jones and Quartz, where she honed her craft as a data journalist before earning her current senior role and recently becoming a Knight Science Journalism Fellow.

Her reporting ranges from the murky world of Facebook’s reliance on artificial intelligence programs that make autonomous decisions about what people see to the human networks that convert disinformation for hire into cold hard cash.

BuzzFeed caught and exposed the Eastern European troll farms that polluted the 2016 election with pro-Trump disinformation, yet Hao reports that Facebook not only allowed people in those same countries to influence Americans with inauthentic content, but also paid them through its Instant Articles advertising platform.

We asked Hao who she thinks bears responsibility for the state of social media in America today. Here is her answer, lightly edited for clarity:

“The tech companies, in particular, have a huge responsibility because they’re the ones that actually have visibility into this stuff. There is only so much research that external researchers can do to figure out whether or not a Twitter account is a bot or a Facebook page is a part of a troll farm or run by a troll farm. But there is infinitely more visibility within the tech companies by the employees themselves because they have access to look deeper at the data behind each of these pages and accounts.

So purely from that perspective, they are the ones that need to be on top of [disinformation]. Either they need to be more transparent and get more people involved in trying to tackle these issues, or they have to take it on themselves.

And as we’ve seen with Facebook lately, they used to be much more open about sharing data, and it turns out that they’ve actually been obfuscating some of the data that they’re sharing to academics for research purposes. And they’re also shutting down a lot of the tools that academics previously used to try and understand these things.

But I do think that the government also needs to step in to actually regulate these companies, because if there are no consequences for letting this activity continue to persist on the platform, then these companies don’t actually have an incentive to do anything. Ultimately, in some cases, it’s more profitable to keep these accounts and these pages running on the platforms because they’re extremely viral, they’re extremely engaging, and it gets users coming back to continue reading stuff on Facebook and Twitter.

So I think both the [companies and the government] share some responsibility.”

The Dworkin Report Powering #TheResistance every day…
